A general chatbot answers from its training set. Izlūks AI answers from today's registry, and shows the row the answer came from.

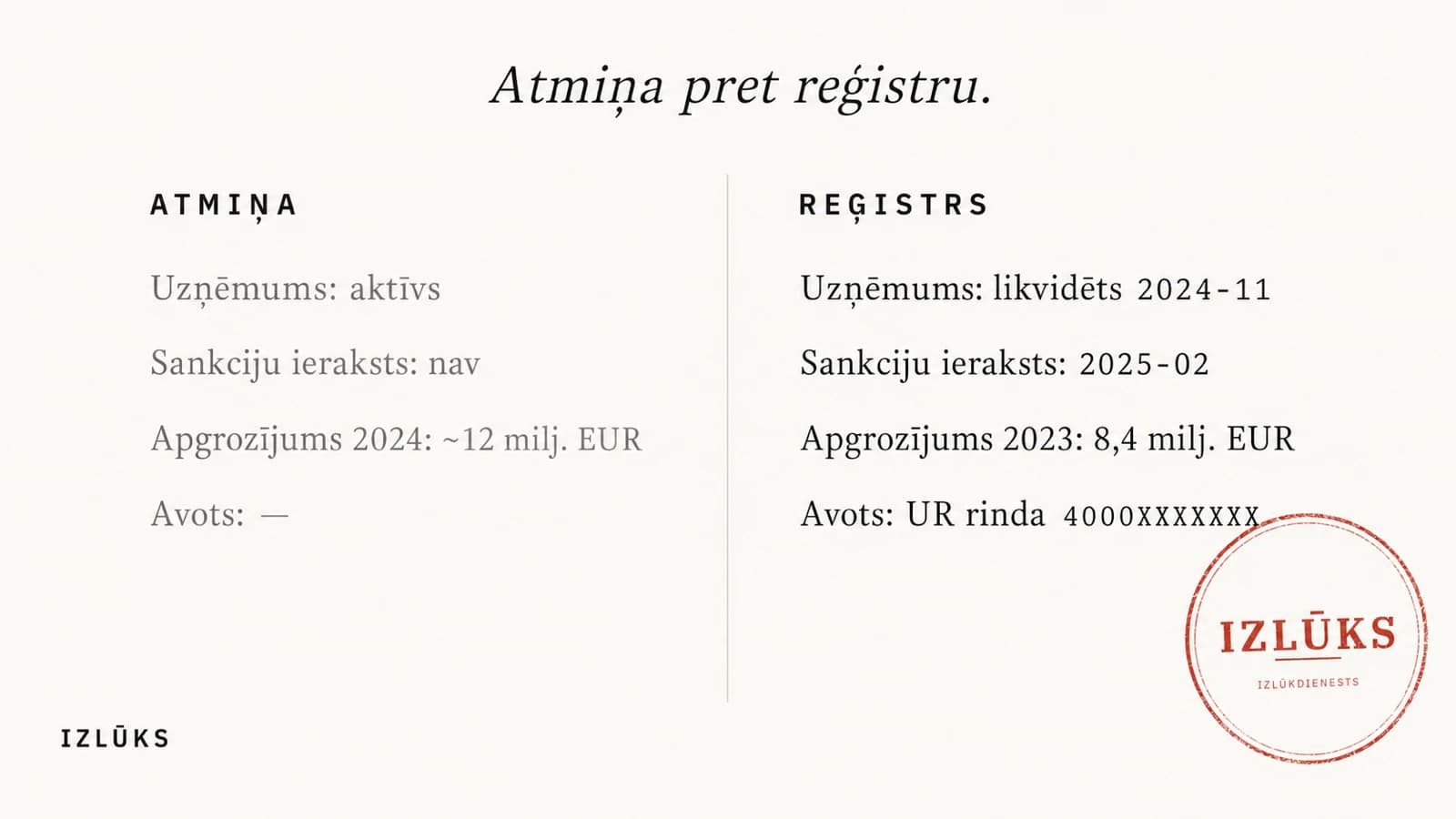

ChatGPT confidently confirms that a Latvian supplier is active and not on any sanctions list. The company was liquidated in November 2024. The sanctions entry was added in February 2025.

The answer sounds precise. In an audit it is not.

Why do the two answers differ?

Large language models answer from the data they were trained on. Training closes on a specific date and does not resume. That date is the training cutoff. For most publicly available models it currently sits three to eighteen months behind today. In that window the Latvian business registry takes in thousands of new and amended filings. Anything that arrives after the cutoff is invisible to the model: new SIA, dissolved SIA, submitted annual reports, updated sanctions packages.

The second problem is structural. The model does not know what it does not know. When a question concerns a specific Latvian SIA the training set never saw, the model generates an answer from what sounds plausible. The number lands in the right order of magnitude; the name in the right form. The technical word is hallucination; for an auditor, the answer is unusable.

Izlūks AI works differently. It does not query the model's memory; it queries the Latvian business registry directly. The answer comes from a row that exists in the database now, alongside the regcode, the date, and — if the question required SQL — the query itself. If the data is not there, the AI says so. It does not generate plausible numbers.

For a compliance analyst or audit partner, an answer is not the deliverable. A defensible answer is. The difference looks small until someone asks for the source. A general chatbot has none. Izlūks AI shows it row by row.

The Monday-morning question can be put to either. Only one has an answer that stands against today's registry.